Another day, another data breach.

Isn’t this all starting to seem a little too familiar? I don’t know about you, but the endless parade of disclosures is taking up entirely too much space in my news feed, pushing out important information on giant arcade cabinets and open source espresso machines. How is this still such a problem when we’ve all moved on to multi-factor authentication and strong, randomly generated, unique-per-service passwords stored in password managers? (Hold on, you haven’t done that? Go take care of that right now! I’ll wait.)

Human Error

Well, what do all these incidents have in common (besides giving CISOs heartburn)? Human error. Regardless of any other measures in place, at some point a human was given the sole responsibility for doing the right thing and they fumbled it. Hey, it happens. Even the smartest of us are extremely fallible creatures and this should surprise no one. What should be surprising is how, even armed with this knowledge, we insist on adopting security practices that assume anything we can usually get right we will always get right. Can you imagine living in a world where that was true?

The initial foothold in most of these attacks was a successful phishing attempt. It might have been a counterfeit login page. It might have been a believable phone call from “customer service”. One way or another, someone was convinced to give out sensitive credentials to someone or something they shouldn’t have. It’s a classic because it works.

You wouldn’t fall for that, right? You always check the headers and never click the links. You always hang up and call them back at the official number. You haven’t opened an email attachment since ActiveX roamed the earth. (Wow, it still does. Who knew?) But do you ever get tired? Or busy? Distracted, stressed, even hungry? No?

I love the smell of swagger and hubris in the morning.

Can you say the same thing about every one of your co-workers? How about your customers? Picture the least alert person you can imagine using a system you care about, and ask yourself why the integrity of that system should rely on their attentiveness.

At least one of these incidents started with a push bombing. On the face of it those seem pretty easy to avoid, right? Just don’t approve MFA prompts unless you’re actually attempting to sign in. But there’s no rule that limits these attacks to times when you have your game face on. Do you really want to trust your security to your reactions when woken up at 3am by a nonstop stream of notifications, with your lizard brain still in charge of make bad noise stop?

Would you agree that a system with a temperamental meat computer as a single point of failure is suboptimal if there are alternatives? If so, my friend, I think you’re ready to hear about phishing-resistant MFA.

What’s Wrong With Most MFA?

Time-based One-Time Password (TOTP) authentication relies on a shared secret and a visible code. Only your authenticator app and the service you’re authenticating with know the secret for generating the correct code at any given moment. The service asks for the code, you provide it, and that proves to the service that you are you. But you get no such assurance from the service.

This leaves you almost as vulnerable to phishing as if you weren’t using MFA at all.

The attacker now has to trick you into sharing your code as well as your password, but the only real obstacle is whether they can act on that code before it expires.
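To make that concrete, here is a minimal Python sketch of the TOTP calculation, following RFC 6238 with its common defaults (SHA-1, 30-second steps, six digits). The `service_accepts` helper, the secret and the timestamps are made up for illustration, and a real service would usually also tolerate a step or so of clock drift. The thing to notice is that the check has no idea who typed the code, or where.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute a TOTP code (RFC 6238) for the given moment."""
    counter = unix_time // step  # which time window we are in
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    number = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


def service_accepts(secret: bytes, submitted_code: str, unix_time: int) -> bool:
    """Hypothetical server-side check: it only compares codes.

    Nothing here knows whether the code came from you or from
    someone relaying what you typed into a fake login page.
    """
    return hmac.compare_digest(totp(secret, unix_time), submitted_code)


# Illustrative values only.
secret = b"12345678901234567890"

# The victim types the current code into a phishing page at t=1000.
typed_into_fake_page = totp(secret, 1000)

# The attacker replays it to the real service ten seconds later.
# Both moments fall in the same 30-second window, so it is accepted.
print(service_accepts(secret, typed_into_fake_page, 1010))
```

The expiry helps only if the attacker is slower than the time step, and an automated relay rarely is.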

Another common approach is MFA via push notification. You attempt to access a service, it sends a push notification to your registered mobile device, you approve the access request, and that “proves” to the service that you’re the one attempting to log in. But as increasing numbers of push bombing incidents show, the fact that you were convinced to interact with a notification isn’t a guarantee of intentionality.

MFA via SMS, email or voice is a train wreck, with all the same vulnerabilities as the methods above and some exciting unique additions like SIM swap attacks. Friends don’t let friends MFA this way. Which is naturally why it’s the only form of MFA most financial institutions support.

Phishing-Resistant MFA

This term applies to two categories of authentication. PKI-based MFA (public key infrastructure, generally encountered as smart cards) has been around for decades. But since it depends on having that infrastructure and strong identity management in place, it’s generally the province of government agencies and large enterprises and is less supported by the types of services many of us use. The odds are good that if PKI makes sense for you, you’re already using it and are in a better position to write about it than I am. But do it on your own time.

A more appropriate option for most people is FIDO (Fast IDentity Online) authentication. Those links at the top of the post? I bet I snuck something past you. The last attack, on Cloudflare, didn’t actually result in a breach. Why not? Because everyone at Cloudflare authenticates with a FIDO2-compliant key that enforces origin binding with public key cryptography. Their write-up does a great job of explaining how the attack worked and how it would have played out if they were using standard TOTP MFA, but glosses over how it fizzled out when it ran into FIDO.

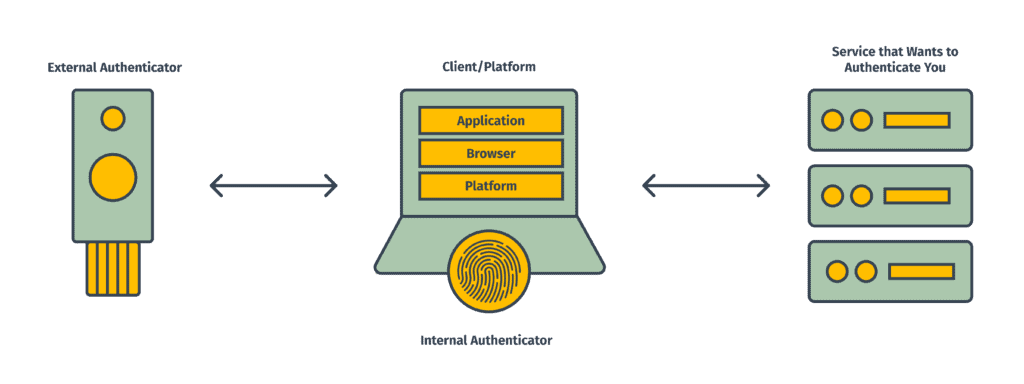

Unlike TOTP, FIDO doesn’t rely on a single shared secret known to both the authenticator and the service. When a hardware key is registered with a service the device generates a new public-private key pair. The public key goes to the service, while the private key never leaves the secure storage of the device, where it’s tied to the identity of the service.

During authentication, the service sends a challenge to the device. The device finds the private key tied to that service identity and uses it to sign the challenge. The service uses the public key to verify that the challenge was signed by the real private key and allows the connection.

This process delivers some very powerful assurances. There is no user-facing code you can be tricked into revealing. Only the private key can successfully sign the challenge, so the service can be sure the hardware key is authentic. Just as importantly, the device can only find a private key for the exact service it was registered with. It’s not going to be fooled by a phishing site at the wrong URL, regardless of how good a forgery it is.
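Here is a toy Python model of that register-then-challenge flow, with some loud caveats. Real keys sign with something like ECDSA, which Python’s standard library doesn’t provide, so this sketch swaps in Lamport one-time signatures built from SHA-256: genuinely public-key, but each key pair is only safe to sign with once. The `HardwareKey` and `Service` classes, the domain names and the signed payload are all invented for illustration. Actual WebAuthn signs a richer structure, and it is the browser, not the page, that tells the key which site is asking.

```python
import hashlib
import secrets


def _bits(message: bytes):
    """The 256 bits of SHA-256(message), lowest bit first."""
    value = int.from_bytes(hashlib.sha256(message).digest(), "big")
    return [(value >> i) & 1 for i in range(256)]


def keygen():
    """Stand-in key pair (Lamport): 256 pairs of secrets and their hashes."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(hashlib.sha256(a).digest(), hashlib.sha256(b).digest()) for a, b in private]
    return private, public


def sign(private, message: bytes):
    """Reveal one secret per message bit. Safe for ONE signature per key."""
    return [private[i][bit] for i, bit in enumerate(_bits(message))]


def verify(public, message: bytes, signature) -> bool:
    return len(signature) == 256 and all(
        hashlib.sha256(part).digest() == public[i][bit]
        for i, (part, bit) in enumerate(zip(signature, _bits(message)))
    )


def payload(site: str, challenge: bytes) -> bytes:
    """Simplified signed data: hash of the site identity plus the challenge."""
    return hashlib.sha256(site.encode()).digest() + challenge


class HardwareKey:
    def __init__(self):
        self._private_keys = {}  # site -> private key; never leaves the device

    def register(self, site: str):
        private, public = keygen()
        self._private_keys[site] = private
        return public  # only the public half goes to the service

    def authenticate(self, site: str, challenge: bytes):
        private = self._private_keys.get(site)
        if private is None:
            return None  # no key for this site, so nothing to sign with
        return sign(private, payload(site, challenge))


class Service:
    def __init__(self, site: str):
        self.site = site
        self.public_keys = {}  # username -> public key

    def new_challenge(self) -> bytes:
        return secrets.token_bytes(32)

    def login(self, username: str, challenge: bytes, signature) -> bool:
        if signature is None:
            return False
        return verify(self.public_keys[username], payload(self.site, challenge), signature)


key = HardwareKey()
bank = Service("bank.example")
bank.public_keys["alice"] = key.register("bank.example")

# Genuine login: the browser is on bank.example and says so to the key.
challenge = bank.new_challenge()
genuine = bank.login("alice", challenge, key.authenticate("bank.example", challenge))

# Phishing: the look-alike page relays a real challenge, but the browser
# reports the look-alike's own domain, and the key has nothing for it.
relayed = bank.new_challenge()
phished = bank.login("alice", relayed, key.authenticate("bank-example.test", relayed))

print(genuine, phished)
```

Notice that the user never gets a say in which site the key answers to. That decision is made by a dictionary lookup, which doesn’t get tired or distracted.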

The only way around the origin binding I’m aware of would be for the attacker to poison the victim’s DNS so their phishing site was reachable at the real service’s URL, and to present a valid TLS certificate for that domain. That would involve a compromise of the user’s machine significant enough for the attacker to add their own certificate authority as a trusted root, or the ability to generate valid certificates for the service’s domain. If either of those is true you’re going to have a bad day regardless of the security process you’re using.

FIDO also sidesteps the issues with push notifications by tying the authentication mechanism directly to the device attempting to authenticate. The hardware key is plugged into the web browsing device (literally or wirelessly) and all interaction between the key and the service goes through the web browser, initiated only by the user’s actions there. There’s no question that the user (or at least the key) is in fact present at the point of login.

I’m sure by now you’ve come up with at least one reason why FIDO sounds nice but would never work for you. Come at me.

Does anyone even support this thing?

You’d be surprised. Windows, macOS, iOS, Linux and Android all support FIDO at the system level. Browser compatibility is strong: Chrome, Firefox, Edge, Safari, Opera, Vivaldi. The major cloud service providers are all covered, as well as common tools like GitHub and Dropbox.

All this sounds great for proving that the key is present, but how does it prove I’m the one using it? What happens if it’s been stolen?

That’s a great point. FIDO is definitely designed to counter remote attackers. Local attackers with physical access to your key aren’t part of the threat model the bulk of the specs are addressing. That’s why, even though FIDO2 in particular is touted as sufficient authentication unto itself, no passwords required, I myself would never go that far. This is where the “multi” in multi-factor authentication really comes into play. The hardware key is something you have, but I would still recommend requiring something you know, whether it’s a password on the account or a PIN on the key (which is absolutely something you can set). The options for unlocking the hardware key are largely up to the manufacturer, but many also come with biometric options like fingerprint readers, so you can also throw something you are into the mix.
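A service can tell the difference between “someone touched the key” and “someone unlocked the key with a PIN or fingerprint”. In WebAuthn, the browser-facing half of FIDO2, each response carries a block of authenticator data that, as I read the spec, starts with a 32-byte hash of the site identity, one flags byte and a 4-byte signature counter. The sketch below parses just that prefix. The function names, the sample bytes and the policy are my own assumptions, and a real server would verify the signature over this data before trusting any of it.

```python
import hashlib
import struct

FLAG_USER_PRESENT = 0x01   # bit 0: someone touched or tapped the key
FLAG_USER_VERIFIED = 0x04  # bit 2: the key checked a PIN or biometric


def parse_authenticator_data(data: bytes) -> dict:
    """Parse the fixed 37-byte prefix of WebAuthn authenticator data."""
    if len(data) < 37:
        raise ValueError("authenticator data is too short")
    flags = data[32]
    return {
        "rp_id_hash": data[:32],
        "user_present": bool(flags & FLAG_USER_PRESENT),
        "user_verified": bool(flags & FLAG_USER_VERIFIED),
        "sign_count": struct.unpack(">I", data[33:37])[0],
    }


def acceptable(data: bytes, expected_site: str, require_user_verification: bool = True) -> bool:
    """Hypothetical policy check, run only after the signature has verified."""
    parsed = parse_authenticator_data(data)
    if parsed["rp_id_hash"] != hashlib.sha256(expected_site.encode()).digest():
        return False  # response was produced for a different site
    if not parsed["user_present"]:
        return False
    if require_user_verification and not parsed["user_verified"]:
        return False  # key was present, but nobody proved they own it
    return True


def sample(site: str, flags: int, count: int) -> bytes:
    """Build illustrative authenticator data; not captured from a real key."""
    return hashlib.sha256(site.encode()).digest() + bytes([flags]) + struct.pack(">I", count)


touch_only = sample("bank.example", FLAG_USER_PRESENT, 7)
touch_and_pin = sample("bank.example", FLAG_USER_PRESENT | FLAG_USER_VERIFIED, 7)

print(acceptable(touch_only, "bank.example"))
print(acceptable(touch_and_pin, "bank.example"))
```

With `require_user_verification` left on, a stolen key that hasn’t been unlocked gets turned away even though it can still produce a valid signature.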

What about when I lose the key?

Yeah, don’t do that. Kidding! Best practice is to have at least one backup key, stored in a different location. The point of the hardware key is to prevent the private keys from ever being readable from outside, which means there’s no way to simply clone a backup. You’re going to need to register each key separately with each service. Not ideal, I know, but it doesn’t have to be as tedious as it sounds either.

A common strategy is to only protect the most sensitive accounts with the hardware key directly, and to use TOTP for the rest, but to use a TOTP authenticator app that supports being locked behind the hardware key. This still provides some of the FIDO benefits (no one can access your authenticator without your key) while minimizing how often keys need to be registered with a new service.

I’m never going to remember to have this thing with me.

You don’t have a keychain? You still have options, by tethering keys to specific devices. Low-profile nano keys are available that can be left in a USB port, giving that machine a more or less permanent authentication connection. And many machines come with built-in secure hardware, such as a trusted platform module (TPM) or Apple’s Secure Enclave, specifically for protecting this kind of information. Windows devices using Hello, Apple devices with Touch ID or Face ID, and some Android phones can all be used as authenticators.

My phone isn’t supported as an authenticator, and the idea of plugging in a key every time I want to authenticate sounds ridiculous, let alone leaving something permanently attached to my phone.

Hardware keys also come in NFC and Bluetooth flavors. Tap to auth!

This sounds expensive.

It’s very likely at least some of the devices you use regularly already support FIDO. But yes, hardware security keys aren’t cheap. Neither are identity theft or corporate data breaches.

There, did you get it out of your system? No? Or have you already dashed off to try it? Either way, let us know!

